import pandas as pd

import geopandas as gpd

import numpy as np

import matplotlib.pyplot as plt

import esda

import libpysal as lps

import contextily as cxLocal Spatial Autocorrelation

In today’s lecture, we will delve into the concept of local spatial autocorrelation, an essential tool in geographic data science that allows us to explore spatial patterns at a more granular level. While global measures like Moran’s I provide an overall summary of spatial autocorrelation across an entire region, local spatial autocorrelation helps identify specific areas where values cluster or where unusual spatial patterns emerge. By examining local indicators of spatial association (LISA), we can detect hotspots, cold spots, and outliers, which are critical for understanding localized spatial phenomena. This approach is particularly valuable in fields like public health, urban studies, and environmental monitoring, where localized insights can inform targeted interventions.

We first review the basics of global spatial autocorrelation and then take a deeper dive into local spatial autocorrelation.

Imports

import seaborn as sns

sns.set_context('notebook')

%matplotlib inline

import warnings

warnings.simplefilter("ignore")Data

For this exercise, we’ll use two datasets:

- a set of polygons (census tracts) for the city of San Diego from the US Census American Community Survey 5-year estimates.

Census Polygons

scag = gpd.read_parquet("~/data/scag_region.parquet")san_diego = scag[scag.geoid.str[:5]=='06073']san_diego.info()<class 'geopandas.geodataframe.GeoDataFrame'>

Index: 627 entries, 158 to 4567

Columns: 194 entries, geoid to geometry

dtypes: float64(191), geometry(1), int64(1), object(1)

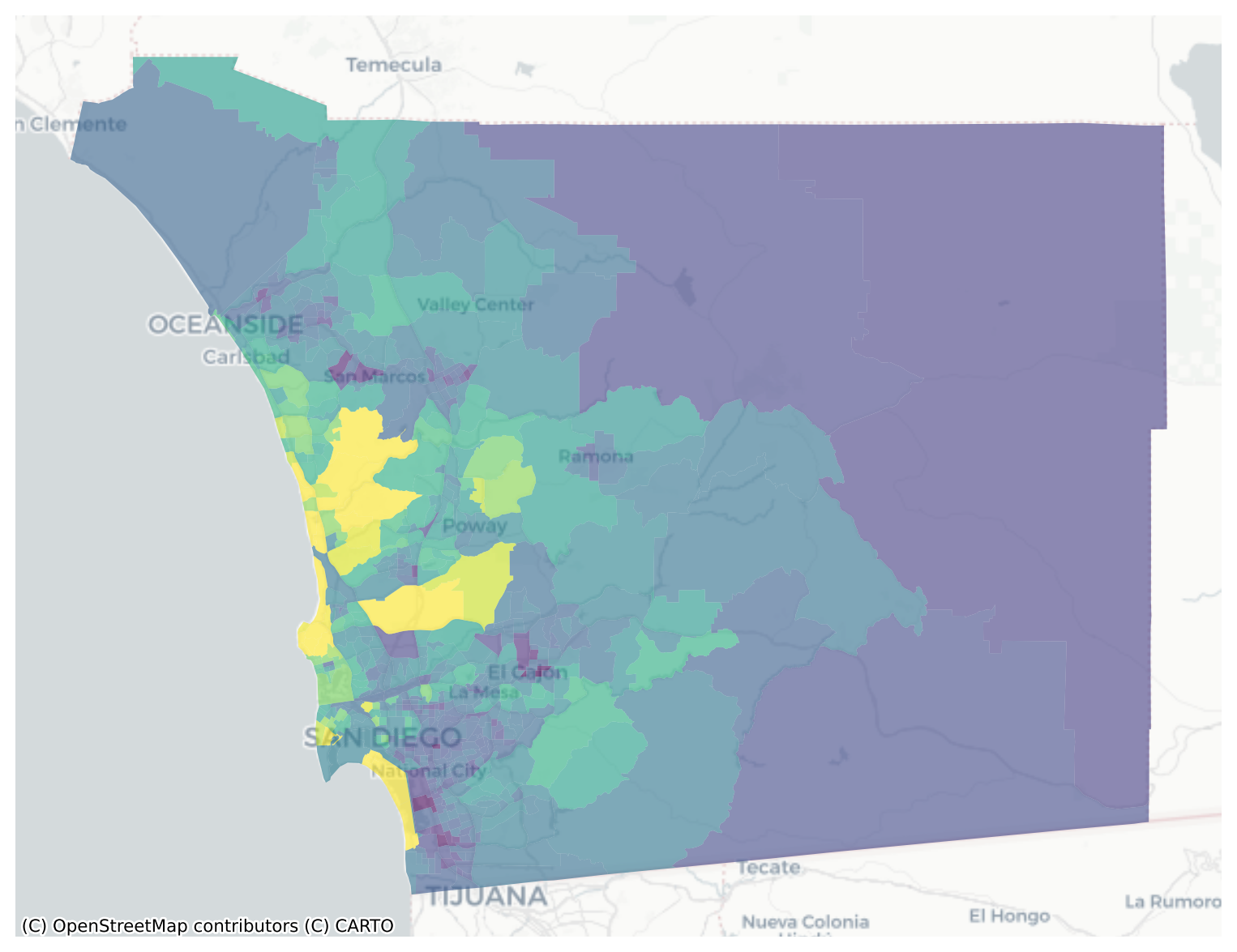

memory usage: 955.2+ KBsan_diego = san_diego.dropna(subset=['median_home_value'])san_diego = san_diego.to_crs(epsg=3857)f, ax = plt.subplots(figsize=(10,10))

san_diego.plot('median_home_value', ax=ax, alpha=0.6)

cx.add_basemap(ax, crs=san_diego.crs.to_string(), source=cx.providers.CartoDB.Positron)

ax.axis('off')(np.float64(-13099175.999157175),

np.float64(-12913655.059545828),

np.float64(3827188.049586126),

np.float64(3968975.95342782))

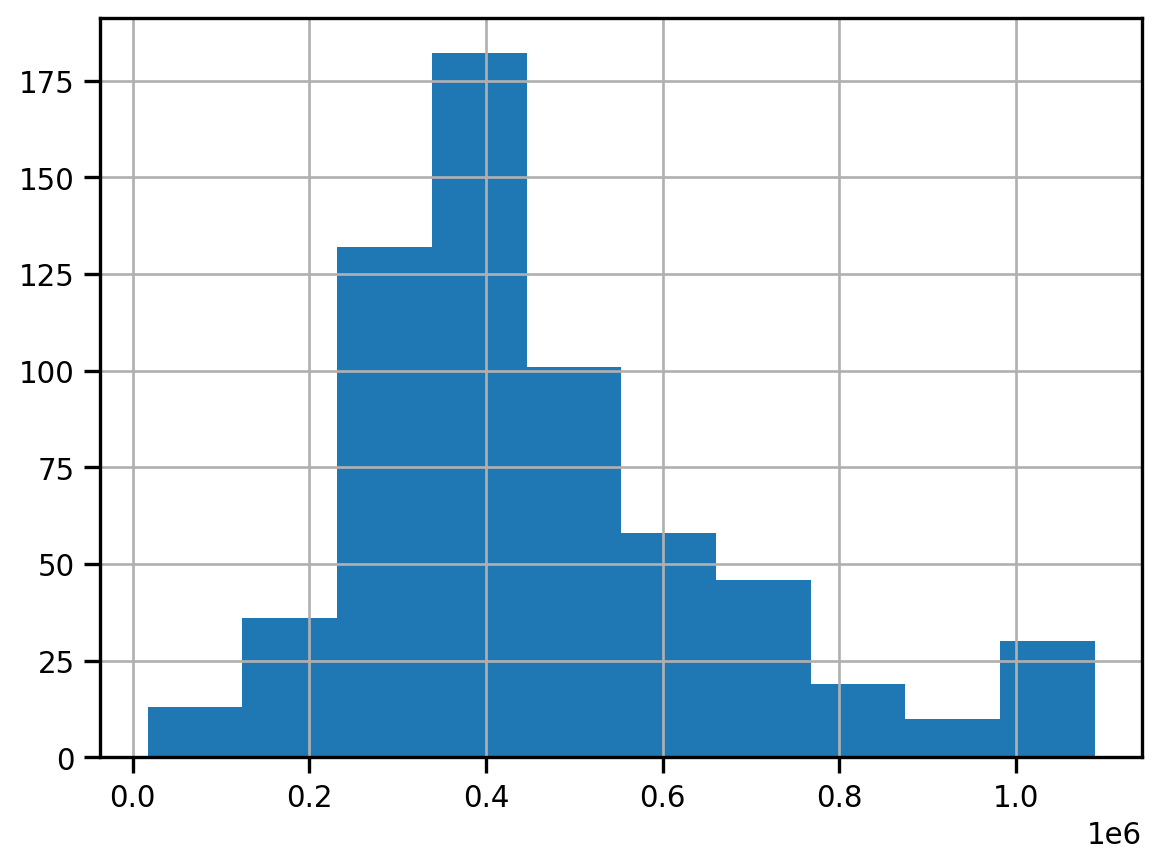

san_diego.median_home_value.hist()

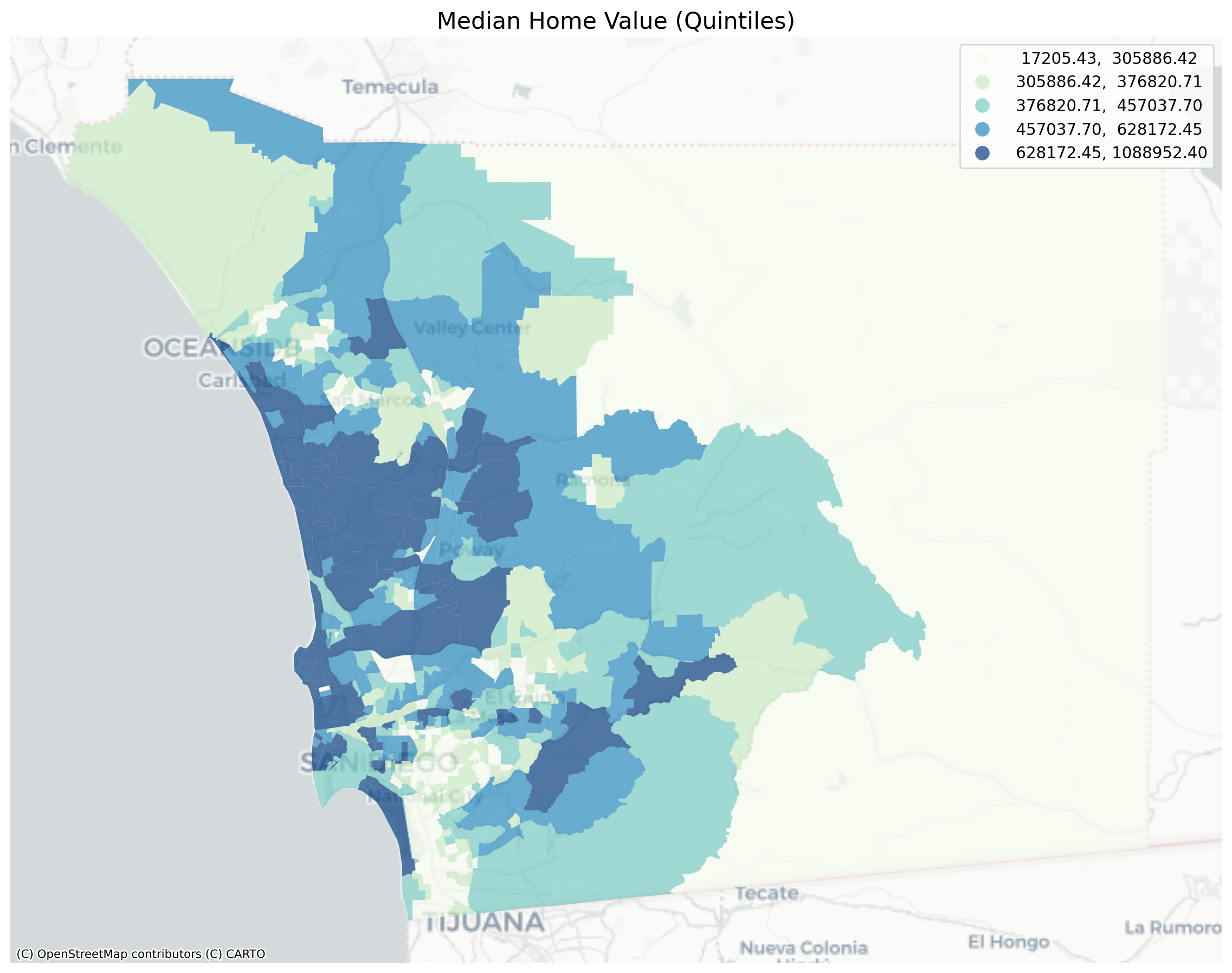

fig, ax = plt.subplots(figsize=(12,12))

san_diego.dropna(subset=['median_home_value']).to_crs(epsg=3857).plot('median_home_value', legend=True, scheme='quantiles', cmap='GnBu', k=5, ax=ax, alpha=0.7)

cx.add_basemap(ax, crs=san_diego.crs.to_string(), source=cx.providers.CartoDB.Positron)

ax.axis('off')

plt.title("Median Home Value (Quintiles)", fontsize=16)

plt.axis('off')

plt.tight_layout()

Global Spatial Autocorrelation

Visual inspection of the map pattern for the prices allows us to search for spatial structure. If the spatial distribution of the prices was random, then we should not see any clustering of similar values on the map. However, our visual system is drawn to the darker clusters along the coast, and a concentration of the lighter hues (lower prices) in the north central and south east. In the point data, the trees are too dense to make any sense of the pattern

Our brains are very powerful pattern recognition machines. However, sometimes they can be too powerful and lead us to detect false positives, or patterns where there are no statistical patterns. This is a particular concern when dealing with visualization of irregular polygons of differning sizes and shapes.

The concept of spatial autocorrelation relates to the combination of two types of similarity: spatial similarity and attribute similarity. Although there are many different measures of spatial autocorrelation, they all combine these two types of simmilarity into a summary measure.

Let’s use PySAL to generate these two types of similarity measures.

Spatial Similarity

We have already encountered spatial weights in a previous notebook. In spatial autocorrelation analysis, the spatial weights are used to formalize the notion of spatial similarity. As we have seen there are many ways to define spatial weights, here we will use queen contiguity:

wq = lps.weights.Queen.from_dataframe(san_diego)

wq.transform = 'r'Attribute Similarity

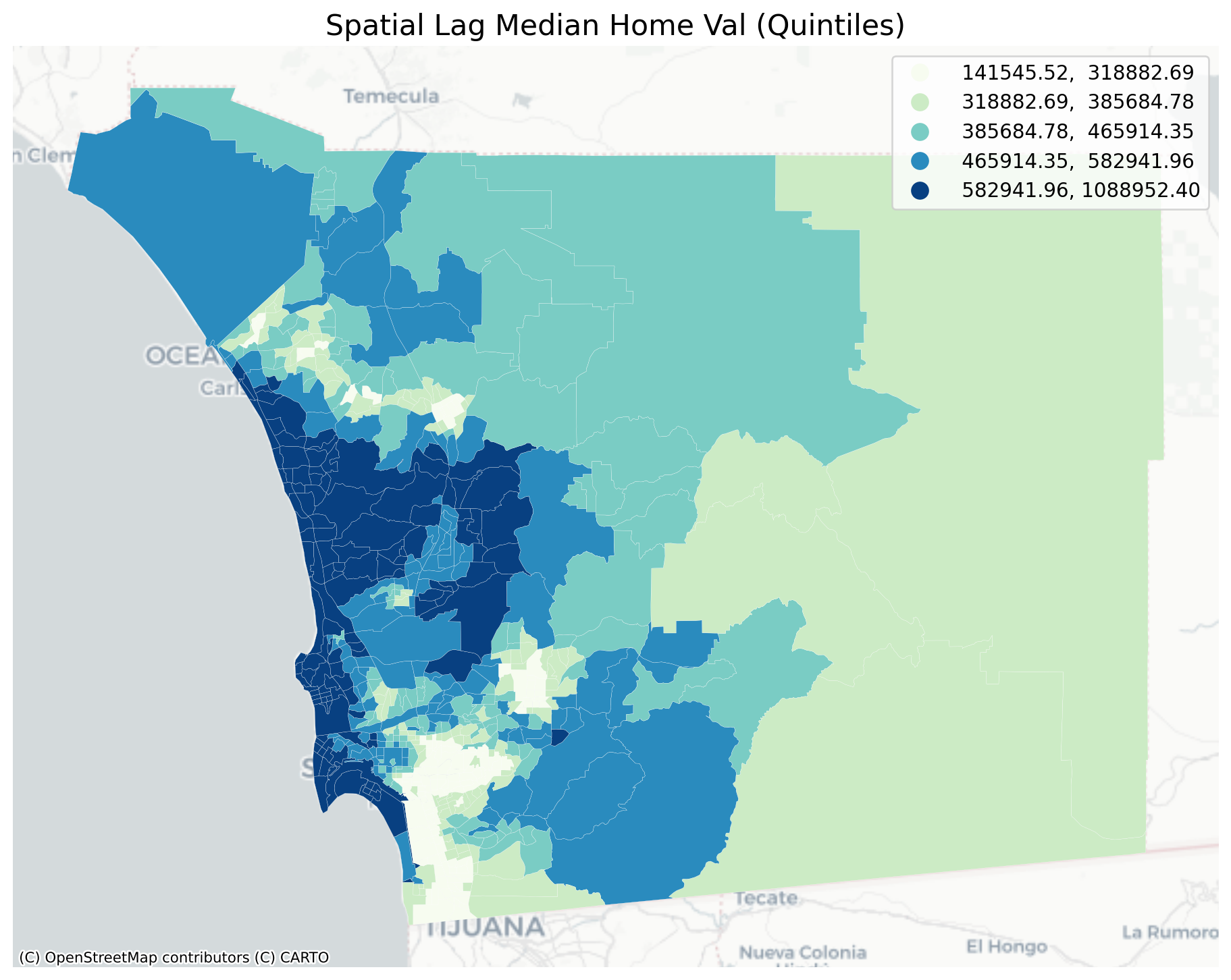

So the spatial weight between neighborhoods \(i\) and \(j\) indicates if the two are neighbors (i.e., geographically similar). What we also need is a measure of attribute similarity to pair up with this concept of spatial similarity. The spatial lag is a derived variable that accomplishes this for us. For neighborhood \(i\) the spatial lag is defined as: \[ylag_i = \sum_j w_{i,j} y_j\]

y = san_diego['median_home_value']

ylag = lps.weights.lag_spatial(wq, y)f, ax = plt.subplots(1, figsize=(12, 12))

san_diego.assign(cl=ylag).plot(column='cl', scheme='quantiles', \

k=5, cmap='GnBu', linewidth=0.1, ax=ax, \

edgecolor='white', legend=True)

cx.add_basemap(ax, crs=san_diego.crs.to_string(), source=cx.providers.CartoDB.Positron)

ax.axis('off')

plt.title("Spatial Lag Median Home Val (Quintiles)", fontsize=16)

plt.show()

The quintile map for the spatial lag tends to enhance the impression of value similarity in space. It is, in effect, a local smoother.

san_diego['lag_median_pri'] = ylag

f,ax = plt.subplots(1,2,figsize=(12,4))

san_diego.plot(column='median_home_value', ax=ax[0],

scheme="quantiles", k=5, cmap='GnBu')

#ax[0].axis(san_diego.total_bounds[np.asarray([0,2,1,3])])

ax[0].set_title("Price", fontsize=16)

san_diego.plot(column='lag_median_pri', ax=ax[1],

scheme='quantiles', cmap='GnBu', k=5)

cx.add_basemap(ax[0], crs=san_diego.crs.to_string(), source=cx.providers.CartoDB.Positron)

cx.add_basemap(ax[1], crs=san_diego.crs.to_string(), source=cx.providers.CartoDB.Positron)

ax[1].set_title("Spatial Lag Price", fontsize=16)

ax[0].axis('off')

ax[1].axis('off')

plt.show()

However, we still have the challenge of visually associating the value of the prices in a neighborhod with the value of the spatial lag of values for the focal unit. The latter is a weighted average of home prices in the focal county’s neighborhood.

To complement the geovisualization of these associations we can turn to formal statistical measures of spatial autocorrelation.

Testing for Global Spatial Autocorrelation

Join counts

One way to formalize a test for spatial autocorrelation in a binary attribute is to consider the so-called joins. A join exists for each neighbor pair of observations, and the joins are reflected in our binary spatial weights object wq.

Each unit can take on one of two values “Black” or “White”, analogous to the layout of a chessboard

nrows, ncols = 9,9

image = np.zeros(nrows*ncols)

# Set every other cell to 1

image[::2] = 1

# Reshape things into a 9x9 grid.

image = image.reshape((nrows, ncols))

plt.matshow(image, cmap='Greys')

plt.axis('off')

plt.show()

and so for a given pair of neighboring locations there are three different types of joins that can arise:

- Black Black (BB)

- White White (WW)

- Black White (or White Black) (BW)

We can use the esda package from PySAL to carry out join count analysis. In the case of our point data, the join counts can help us determine whether different varieties of trees tend to grow together, spread randomly through space, or compete with one another for precious resources

Polygon Data

With polygon data, we can conduct an analysis using a contiguity matrix. For our housing price data, we need to first discretize the variable we’re using; to keep things simple, we’ll binarize our price data using the median so that “high” values are tracts whose median home price is above the city’s median and “low” values are those below

y.median()np.float64(405416.57303370786)san_diego.shape(627, 195)yb = y > y.median()

sum(yb)313yb = y > y.median()

labels = ["0 Low", "1 High"]

yb = [labels[i] for i in 1*yb]

san_diego['yb'] = ybfig, ax = plt.subplots(figsize=(12,12))

san_diego.plot(column='yb', cmap='binary', edgecolor='grey', legend=True, ax=ax)

The spatial distribution of the binary variable immediately raises questions about the juxtaposition of the “black” and “white” areas.

Given that we have 308 Black polygons on our map, what is the number of Black Black (BB) joins we could expect if the process were such that the Black polygons were randomly assigned on the map?

yb = 1 * (y > y.median()) # convert back to binary

wq = lps.weights.Queen.from_dataframe(san_diego)

wq.transform = 'b'

np.random.seed(12345)

jc = esda.join_counts.Join_Counts(yb, wq)The resulting object stores the observed counts for the different types of joins:

jc.bbnp.float64(754.0)jc.wwnp.float64(745.0)jc.bwnp.float64(475.0)Note that the three cases exhaust all possibilities:

jc.bb + jc.ww + jc.bwnp.float64(1974.0)and

wq.s0 / 2np.float64(1974.0)which is the unique number of joins in the spatial weights object.

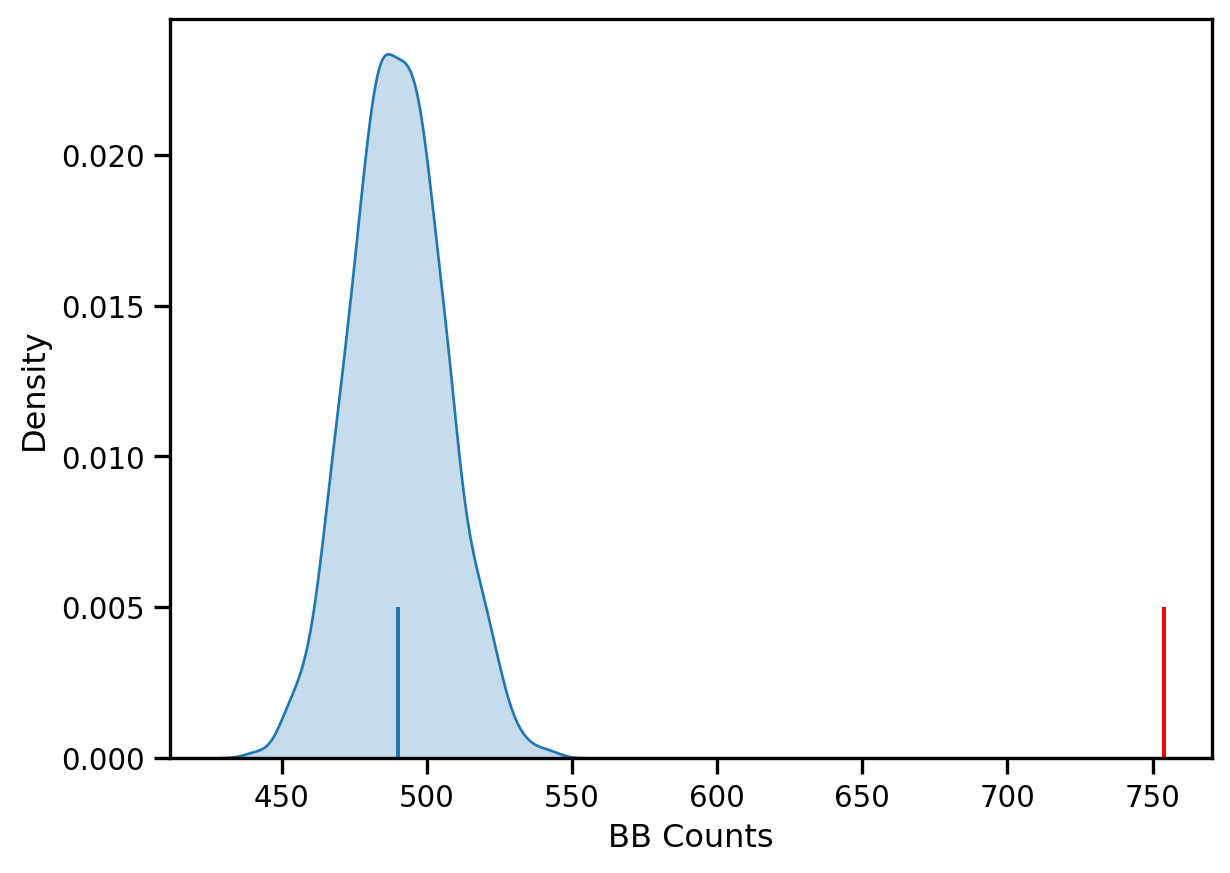

Our object tells us we have observed 736 BB joins:

jc.bbnp.float64(754.0)The critical question for us, is whether this is a departure from what we would expect if the process generating the spatial distribution of the Black polygons were a completely random one? To answer this, PySAL uses random spatial permutations of the observed attribute values to generate a realization under the null of complete spatial randomness (CSR). This is repeated a large number of times (999 default) to construct a reference distribution to evaluate the statistical significance of our observed counts.

The average number of BB joins from the synthetic realizations is:

jc.mean_bbnp.float64(490.03103103103103)which is less than our observed count. The question is whether our observed value is so different from the expectation that we would reject the null of CSR?

import seaborn as sbn

sbn.kdeplot(jc.sim_bb, shade=True)

plt.vlines(jc.bb, 0, 0.005, color='r')

plt.vlines(jc.mean_bb, 0,0.005)

plt.xlabel('BB Counts')Text(0.5, 0, 'BB Counts')

The density portrays the distribution of the BB counts, with the black vertical line indicating the mean BB count from the synthetic realizations and the red line the observed BB count for our prices. Clearly our observed value is extremely high. A pseudo p-value summarizes this:

jc.p_sim_bbnp.float64(0.001)Since this is below conventional significance levels, we would reject the null of complete spatial randomness in favor of spatial autocorrelation in market prices.

Continuous Case

The join count analysis is based on a binary attribute, which can cover many interesting empirical applications where one is interested in presence and absence type phenomena. In our case, we artificially created the binary variable, and in the process we throw away a lot of information in our originally continuous attribute. Turning back to the original variable, we can explore other tests for spatial autocorrelation for the continuous case.

First, we transform our weights to be row-standardized, from the current binary state:

wq.transform = 'r'y = san_diego['median_home_value']Moran’s I is a test for global autocorrelation for a continuous attribute:

np.random.seed(12345)

mi = esda.moran.Moran(y, wq)

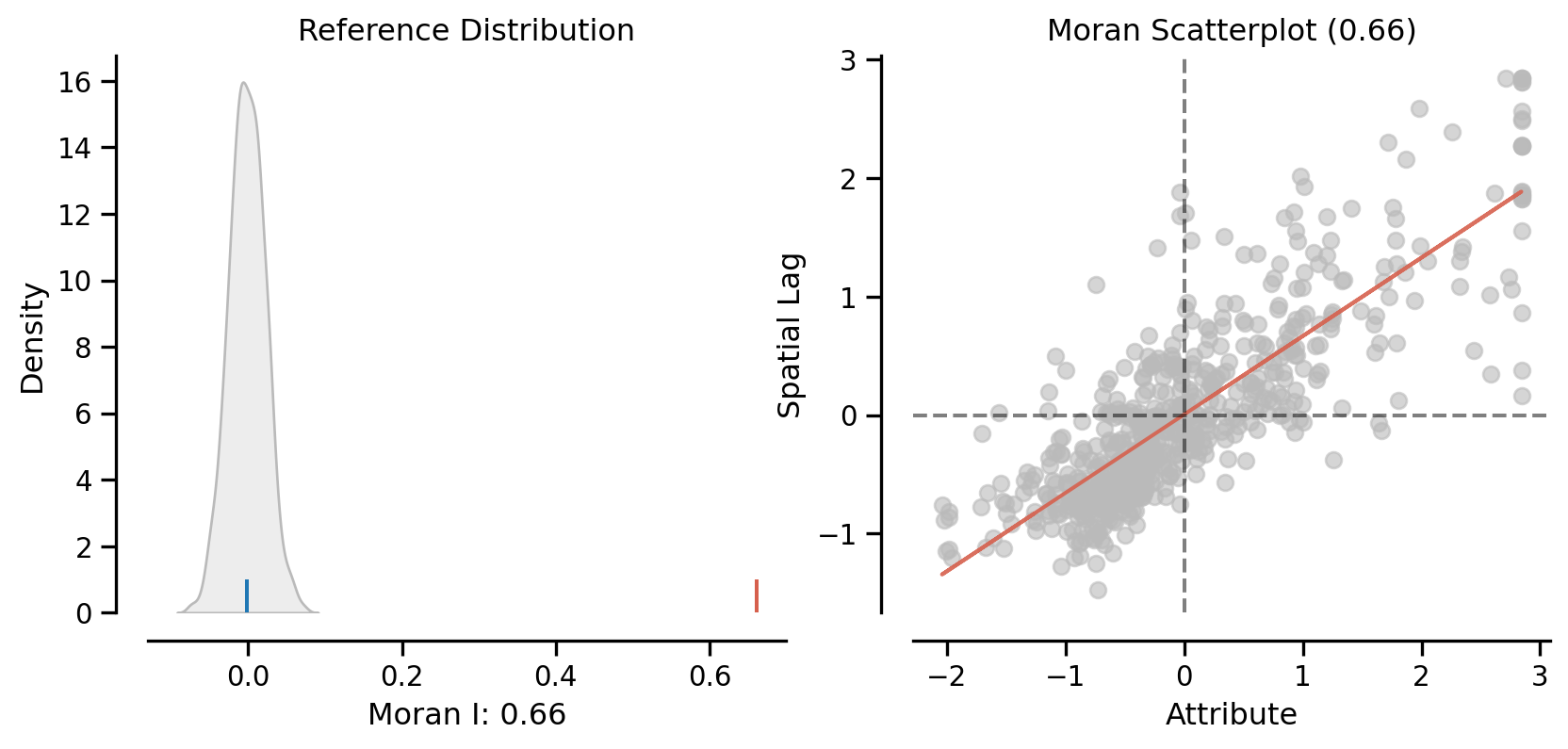

mi.Inp.float64(0.660917168991019)Again, our value for the statistic needs to be interpreted against a reference distribution under the null of CSR. PySAL uses a similar approach as we saw in the join count analysis: random spatial permutations.

from splot.esda import plot_moran

plot_moran(mi, zstandard=True, figsize=(10,4))

plt.show()

mi.p_simnp.float64(0.001)On the left, we have the reference distribution versus the observed statistic; on the right, we have a plot of the focal value against its spatial lag, for which the Moran I statistic serves as the slope

Here our observed value is again in the upper tail

mi.p_simnp.float64(0.001)Local Autocorrelation: Hot Spots, Cold Spots, and Spatial Outliers

Local autocorrelation statistics provide indications of spatial autocorrelation in a subset of the data. With \(n\) observations, there are \(n\) such subsets, with each subset consisting of a focal unit together with the neighbors of the focal unit.

PySAL has many local autocorrelation statistics. Let’s compute a local Moran statistic for the same data

wq.transform = 'r'

lag_price = lps.weights.lag_spatial(wq, san_diego['median_home_value'])np.random.seed(12345)

li = esda.moran.Moran_Local(y, wq)Now, instead of a single \(I\) statistic, we have an array of local \(I_i\) statistics, stored in the .Is attribute, and p-values from the simulation are in p_sim.

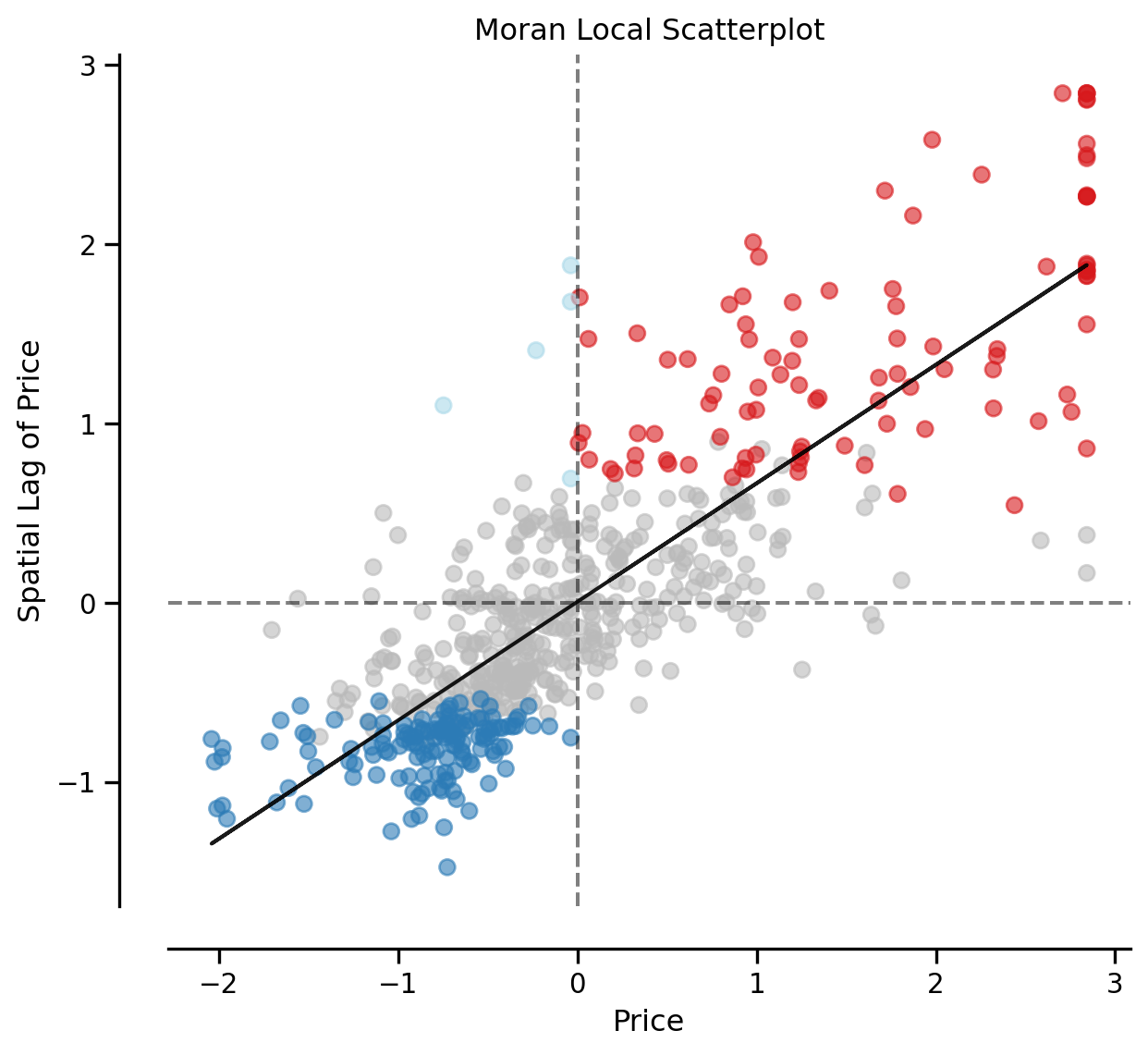

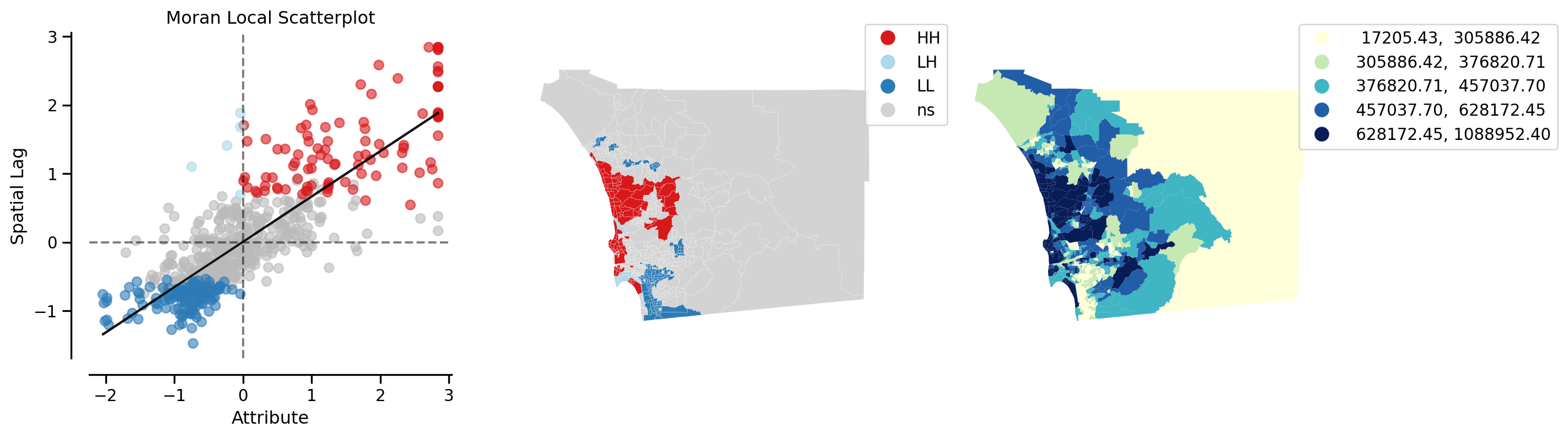

from splot.esda import moran_scatterplot

fig, ax = moran_scatterplot(li)

ax.set_xlabel('Price')

ax.set_ylabel('Spatial Lag of Price')

plt.show()

We can again test for local clustering using permutations, but here we use conditional random permutations (different distributions for each focal location)

(li.p_sim < 0.05).sum()np.int64(246)fig, ax = moran_scatterplot(li, p=0.05)

ax.set_xlabel('Price')

ax.set_ylabel('Spatial Lag of Price')

plt.show()

We can distinguish the specific type of local spatial association reflected in the four quadrants of the Moran Scatterplot above: - High-High (upper right) - Low-Low (bottom left) - High-Low (lower right) - Low-High (upper left)

This information is encoded in the q attribution of the Local Moran object:

li.qarray([4, 1, 2, 3, 3, 3, 3, 4, 3, 3, 1, 3, 3, 3, 3, 3, 3, 3, 3, 3, 3, 3,

3, 2, 3, 2, 2, 1, 1, 2, 3, 3, 3, 3, 3, 2, 1, 3, 3, 3, 2, 1, 1, 1,

3, 1, 3, 3, 4, 3, 1, 1, 3, 3, 3, 1, 3, 3, 1, 3, 3, 3, 3, 3, 1, 2,

2, 1, 2, 4, 3, 3, 4, 3, 3, 3, 3, 3, 3, 1, 1, 1, 1, 3, 3, 3, 1, 1,

3, 3, 2, 2, 2, 1, 4, 3, 3, 3, 3, 3, 3, 3, 3, 1, 1, 1, 1, 3, 3, 2,

2, 4, 3, 3, 1, 3, 3, 3, 3, 1, 1, 3, 3, 3, 3, 1, 3, 3, 1, 1, 1, 3,

1, 3, 3, 3, 1, 1, 3, 3, 3, 1, 1, 3, 3, 3, 3, 1, 3, 3, 1, 3, 3, 1,

1, 3, 3, 3, 3, 1, 1, 3, 3, 4, 3, 3, 3, 3, 3, 3, 3, 3, 1, 3, 1, 1,

1, 1, 1, 2, 1, 2, 1, 1, 2, 1, 3, 3, 2, 3, 4, 4, 2, 3, 3, 3, 3, 3,

3, 3, 3, 3, 3, 1, 3, 3, 2, 1, 1, 2, 3, 3, 1, 1, 1, 1, 1, 1, 1, 1,

1, 1, 3, 3, 3, 4, 1, 3, 3, 1, 2, 4, 3, 3, 3, 3, 3, 3, 1, 2, 3, 2,

2, 2, 3, 3, 3, 3, 3, 3, 1, 1, 1, 2, 4, 3, 3, 1, 3, 3, 3, 3, 3, 1,

3, 3, 3, 2, 1, 1, 1, 3, 4, 3, 3, 3, 3, 3, 3, 3, 3, 3, 1, 3, 3, 4,

1, 1, 1, 1, 3, 1, 3, 4, 4, 4, 3, 2, 1, 1, 2, 3, 3, 2, 3, 4, 3, 3,

3, 3, 3, 4, 3, 3, 4, 1, 3, 3, 3, 4, 1, 3, 3, 3, 3, 3, 4, 4, 3, 2,

3, 3, 1, 1, 4, 4, 3, 3, 3, 3, 3, 3, 2, 3, 3, 3, 3, 1, 1, 2, 3, 4,

1, 1, 3, 3, 1, 3, 3, 3, 2, 3, 3, 3, 4, 3, 1, 3, 3, 2, 1, 2, 3, 3,

4, 3, 3, 3, 3, 1, 1, 1, 3, 3, 3, 1, 1, 3, 3, 4, 1, 1, 1, 1, 2, 1,

1, 2, 3, 3, 3, 3, 4, 2, 1, 1, 1, 1, 1, 3, 3, 3, 1, 1, 2, 4, 1, 3,

3, 3, 2, 1, 2, 3, 3, 3, 3, 3, 3, 2, 3, 3, 3, 4, 2, 1, 1, 3, 3, 1,

3, 3, 3, 3, 3, 2, 3, 2, 1, 3, 3, 3, 3, 3, 3, 3, 3, 1, 3, 1, 2, 1,

1, 1, 1, 3, 3, 3, 3, 1, 1, 2, 1, 1, 1, 1, 1, 1, 2, 1, 3, 3, 1, 1,

1, 1, 3, 1, 3, 3, 2, 3, 1, 1, 3, 3, 3, 3, 4, 2, 1, 1, 1, 2, 3, 1,

3, 3, 1, 1, 3, 3, 1, 2, 3, 3, 3, 3, 3, 3, 1, 1, 3, 3, 3, 1, 1, 1,

3, 3, 1, 4, 1, 3, 1, 2, 1, 3, 1, 3, 3, 3, 3, 4, 3, 3, 4, 1, 4, 3,

3, 3, 3, 2, 3, 1, 1, 4, 1, 1, 1, 1, 3, 3, 1, 3, 3, 2, 2, 3, 2, 4,

1, 1, 1, 1, 3, 3, 4, 1, 3, 1, 2, 3, 1, 1, 1, 1, 2, 2, 3, 3, 3, 1,

3, 2, 1, 1, 3, 1, 1, 3, 3, 1, 3, 1, 1, 1, 3, 3, 3, 3, 1, 3, 1, 4,

4, 3, 3, 3, 3, 3, 1, 3, 4, 3, 3])Hot Spots

The local statistic can be used to identify so-called “hot spots”. To be a hot spot, a focal unit has to satisfy two conditions:

- It is in the High-High quadrant of the Moran Scatter Plot

- It has a statistically significant Local Moran

hotspot = (li.p_sim < 0.05) * (li.q==1)

print(f"There are {hotspot.sum()} hot spots")There are 98 hot spotsCold Spots

A second type of positive local spatial autocorrelation occurs in the case of “cold spots”. To be a cold spot, a focal unit has to satisfy two conditions:

- It is in the Low-Low quadrant of the Moran Scatter Plot

- It has a statistically significant Local Moran

coldspot = (li.p_sim < 0.05) * (li.q==3)

print(f"There are {coldspot.sum()} cold spots")There are 143 cold spotsSpatial Outliers

While hot spots and cold spots reflect positive local spatial autocorrelation, we know that another departure from spatial randomness can occur: negative spatial autocorrelation. This is when the value dissimilarity between the focal unit and those of its neighbors is larger than what could be due to random chance.

There are two types of spatial outliers.

A doughnut (the kind with a hole) is a spatial outlier where the focal unit is low, but the neighboring values are high. This would place the observation in quadrant 2 of the scatter plot (LH). The position is a necessary, but not sufficient, condition for being classified as a doughnut. The local Moran value must also be statistically significant.

doughnut = (li.p_sim < 0.05) * (li.q==2)

print(f"There are {doughnut.sum()} doughnuts")There are 5 doughnutsThe second type of spatial outlier is the “diamond in the rough”. Here the focal value is high, but the neighboring values are low, and the local Moran value is statistically significant:

diamond = (li.p_sim < 0.05) * (li.q==4)

print(f"There are {diamond.sum()} diamonds")There are 0 diamondsIn this case, we do not have any diamond observations, despite having

(li.q==4).sum()np.int64(44)observations in the HL quadrant of the scatter plot. In other words, all 44 of these observations have local statistics that are not significantly different from their expectation under the null of spatial randomness.

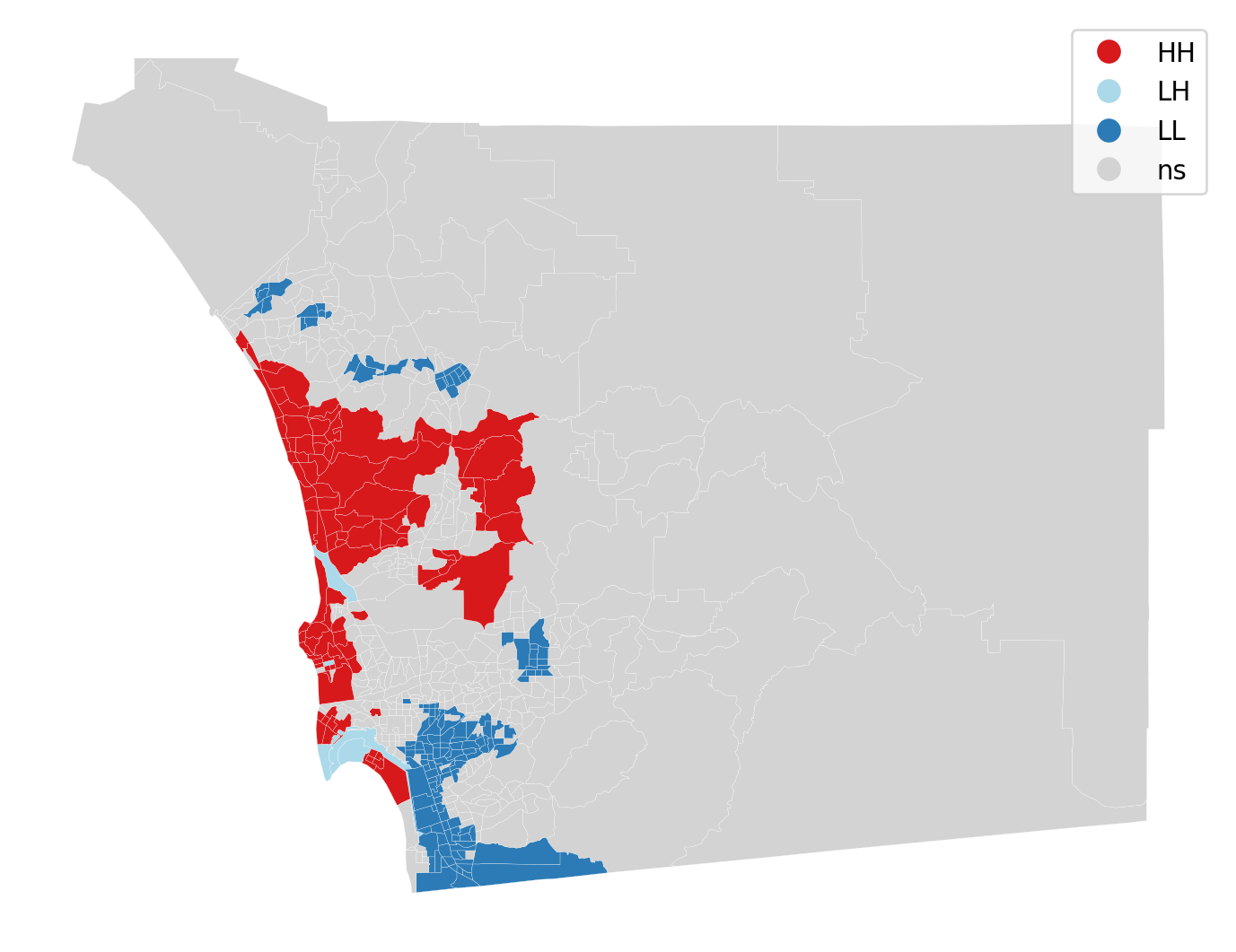

Cluster Map

Using splot, we can also plot these hot spots, cold spots, and spatial outliers on the original geodataframe

from splot.esda import lisa_cluster

lisa_cluster(li, san_diego, p=0.05, figsize = (9,9))

plt.show()

The cluster map displays the locations having a statistically significant LISA value together with a label for the quadrant of the scatter plot. All other locations are colored grey and labeled as “ns” (not significant).

from splot.esda import plot_local_autocorrelation

plot_local_autocorrelation(li, san_diego, 'median_home_value')(<Figure size 1440x384 with 3 Axes>,

array([<Axes: title={'center': 'Moran Local Scatterplot'}, xlabel='Attribute', ylabel='Spatial Lag'>,

<Axes: >, <Axes: >], dtype=object))

Recall that we now have \(n\) statistics, one for each location. This is in contrast to the case of global autocorrelation where there was one statistic for all \(n\) locations. We can thus examine the statistical significance of any location with the local indicators:

np.random.seed(12345)

li = esda.moran.Moran_Local(y, wq, keep_simulations=True)li.p_sim[0:10]array([0.46 , 0.274, 0.46 , 0.046, 0.482, 0.475, 0.03 , 0.442, 0.203,

0.003])li.p_sim[9]np.float64(0.003)null = li.sim

observed = li.Is[9]null = li.sim[626]

observed = li.Is[626]

import seaborn as sbn

sbn.kdeplot(null, shade=True)

plt.vlines(observed,0, 0.2, color='r')

plt.xlabel("Local I for tract 9")Text(0.5, 0, 'Local I for tract 9')

A note on spatial clusters

It is important to note that only the focal units are symbolized on the cluster map. Because the LISA tells us about the spatial association between the value at the focal unit and the values in its neighboring units, the temptation is to include the neighboring values together with the focal unit, if the latter is significant. This would, however, be misleading in the case of a significant focal unit who had neighbors, some of which had LISA values that were not significant.

Instead, the focal unit with a significant LISA forms the seed of a cluster. When it is contiguous to a different focal unit with a significant LISA of the same form, the cluster will consist of multiple spatial units. Such a connected component is a spatial cluster in the fullest sense.

We can identify these spatial clusters in multiple steps.

First, we will create a categorical variable to identify the cluster type. In general our encoding looks like:

- Not significant: 0

- Hot spot: 1

- Doughnut: 2

- Cold spot

- Diamond: 4

cluster_type = hotspot * 1 + doughnut * 2 + coldspot * 3 + diamond * 4

san_diego['cluster_type'] = cluster_type

san_diego.groupby(by='cluster_type').count()| geoid | n_asian_under_15 | n_black_under_15 | n_hispanic_under_15 | n_native_under_15 | n_white_under_15 | n_persons_under_18 | n_asian_over_60 | n_black_over_60 | n_hispanic_over_60 | ... | p_nonhisp_white_persons | p_white_over_60 | p_black_over_60 | p_hispanic_over_60 | p_native_over_60 | p_asian_over_60 | p_disabled | geometry | lag_median_pri | yb | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| cluster_type | |||||||||||||||||||||

| 0 | 381 | 381 | 381 | 381 | 381 | 381 | 381 | 0 | 0 | 0 | ... | 381 | 0 | 0 | 0 | 0 | 0 | 0 | 381 | 381 | 381 |

| 1 | 98 | 98 | 98 | 98 | 98 | 98 | 98 | 0 | 0 | 0 | ... | 98 | 0 | 0 | 0 | 0 | 0 | 0 | 98 | 98 | 98 |

| 2 | 5 | 5 | 5 | 5 | 5 | 5 | 5 | 0 | 0 | 0 | ... | 5 | 0 | 0 | 0 | 0 | 0 | 0 | 5 | 5 | 5 |

| 3 | 143 | 143 | 143 | 143 | 143 | 143 | 143 | 0 | 0 | 0 | ... | 143 | 0 | 0 | 0 | 0 | 0 | 0 | 143 | 143 | 143 |

4 rows × 196 columns

Note that in our example, we did not encounter any diamons, so that cluster type is absent.

We will reset the index to facilitate later steps:

san_diego =san_diego.set_index('geoid')We can now create a categorical map for our cluster types:

san_diego.explore(column='cluster_type', categorical=True,

legend=True,

tooltip=['geoid', 'cluster_type'])To determine the spatial clusters, we will consider the intersection of two neighbor graphs:

- Queen contiguity

- Cluster

The second neighbor graph is constructed as follows:

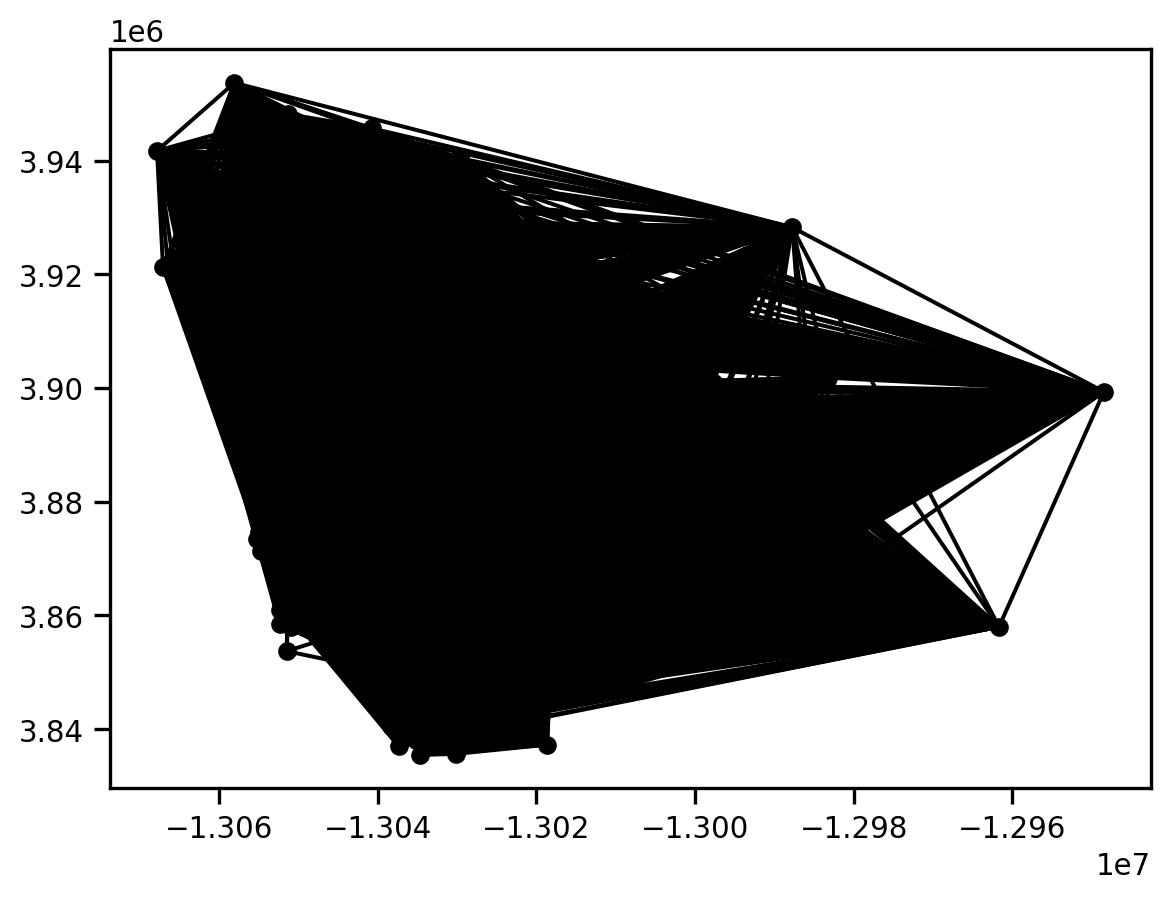

import libpysal

print(libpysal.__version__)

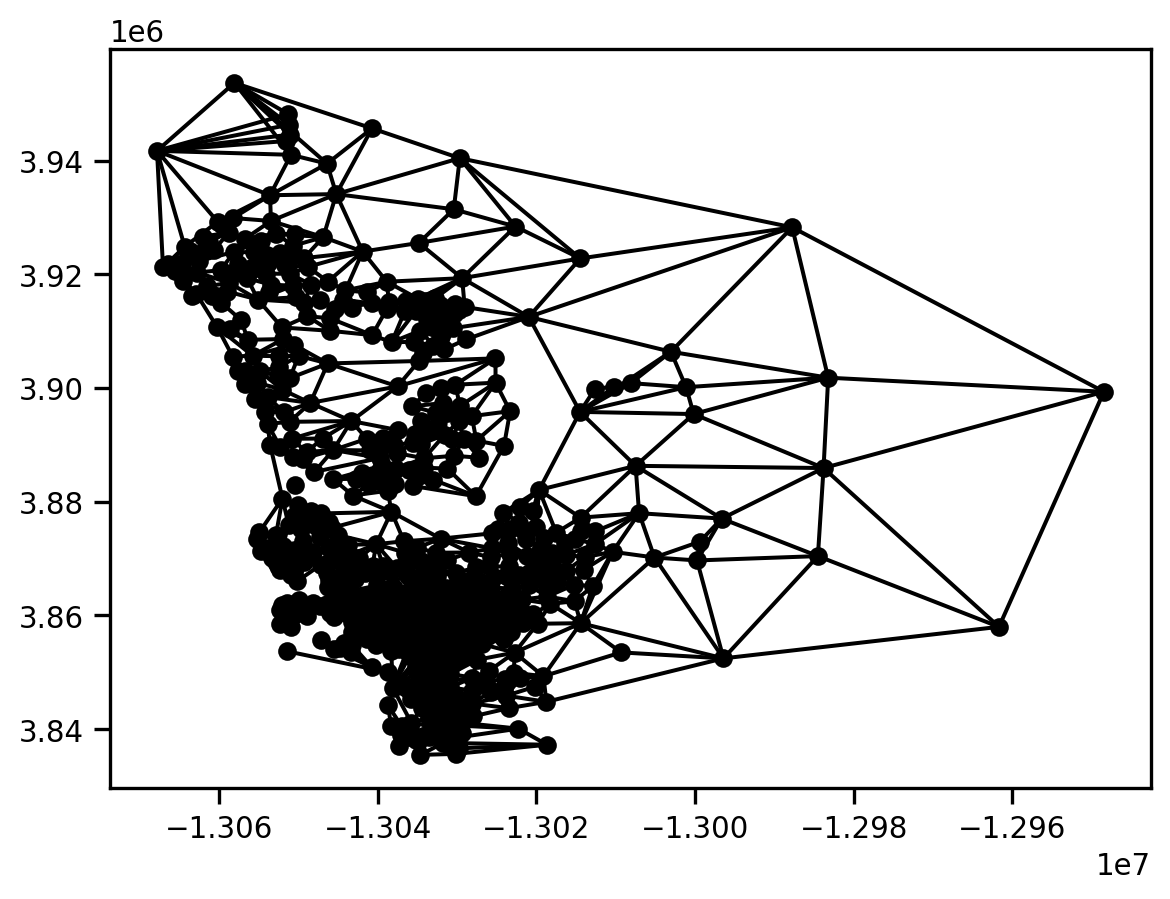

g = libpysal.graph.Graph.build_block_contiguity(san_diego.cluster_type)

print(san_diego.shape, g.n)

g.plot(san_diego)4.12.1

(627, 196) 627

This is a very dense graph, as any pair of polygons that fall in the same cluster type are considered to be neighbors.

We can get some useful summary information from this graph:

g.summary()| Number of nodes: | 627 |

| Number of edges: | 174612 |

| Number of connected components: | 4 |

| Number of isolates: | 0 |

| Number of non-zero edges: | 174612 |

| Percentage of non-zero edges: | 44.42% |

| Number of asymmetries: | NA |

| S0: | 174612 | GG: | 174612 |

| S1: | 349224 | G'G: | 174612 |

| S3: | 235288056 | G'G + GG: | 349224 |

['06073014901', '06073000300', '06073000800', '0607301850...]

The key thing to note here is that the graph has 4 connected components. These correspond to the four cluster types in our map from above.

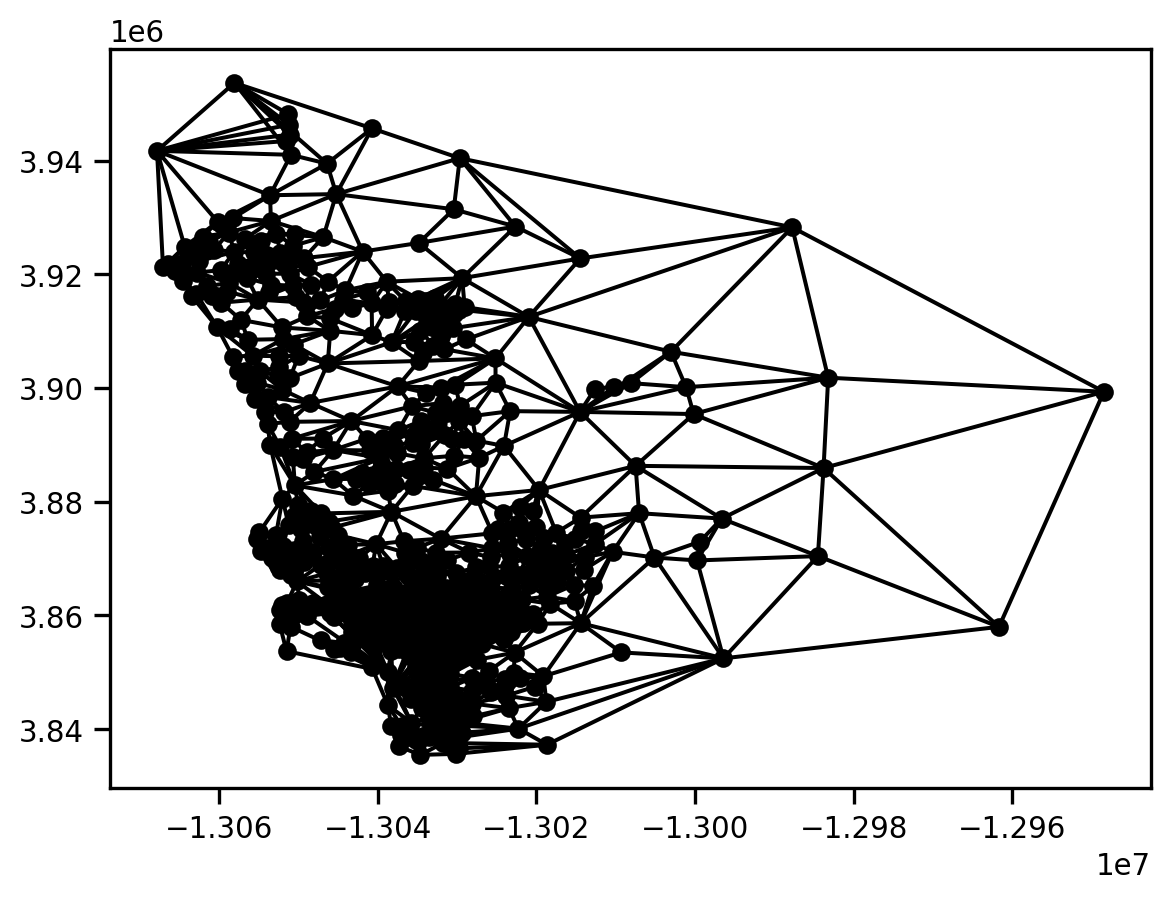

We can build a similar neighbor graph for our Queen contiguity neighbors:

gq = libpysal.graph.Graph.build_contiguity(san_diego)

gq.plot(san_diego)

This is less dense than the Cluster graph.

gq.summary()| Number of nodes: | 627 |

| Number of edges: | 3376 |

| Number of connected components: | 1 |

| Number of isolates: | 0 |

| Number of non-zero edges: | 3376 |

| Percentage of non-zero edges: | 0.86% |

| Number of asymmetries: | NA |

| S0: | 3376 | GG: | 3376 |

| S1: | 6752 | G'G: | 3376 |

| S3: | 80192 | G'G + GG: | 6752 |

['06073014901', '06073000300', '06073000800', '0607300220...]

Note that the Queen graph has a single connected component.

With these two graphs in hand, a spatial component will be defined as a connected component in the graph that is the intersection of these two graphs:

gint = gq.intersection(g)

gint.plot(san_diego)

gint.summary()| Number of nodes: | 627 |

| Number of edges: | 2646 |

| Number of connected components: | 27 |

| Number of isolates: | 15 |

| Number of non-zero edges: | 2646 |

| Percentage of non-zero edges: | 0.68% |

| Number of asymmetries: | NA |

| S0: | 2646 | GG: | 2646 |

| S1: | 5292 | G'G: | 2646 |

| S3: | 52952 | G'G + GG: | 5292 |

['06073014901', '06073000300', '06073000800', '0607300220...]

We can determine that there are 27 connected components in the intersection graph. This means that we have 27 spatial clusters.

We can assign the component labels from the intersection graph to our geodataframe and then explore the spatial distribution of these connected components:

san_diego['spatial_clusters'] = gint.component_labels

san_diego.explore(column='spatial_clusters', categorical=True, legend=True,

tooltip=['cluster_type', 'spatial_clusters'])Because of the limitation of the number of unique colors available in geopandas’ current implementation, this makes it difficult to pull out each of the 27 different spatial clusters.

To get a better view of things, we can focus on the census tract with index 9 that we saw above:

san_diego.iloc[9]n_asian_under_15 5.0

n_black_under_15 24.0

n_hispanic_under_15 982.0

n_native_under_15 14.0

n_white_under_15 274.0

...

geometry POLYGON ((-13031716.599895265 3914330.02083623...

lag_median_pri 238153.651685

yb 0 Low

cluster_type 3

spatial_clusters 3

Name: 06073020206, Length: 197, dtype: objectHere we see that is is of cluster type 3, and happens to be in the spatial cluster with the label 3.

We can view the intersection of the two graphs focusing on this particular tract as follows:

m = san_diego.loc[gint['06073020206'].index].explore(color="#25b497", tooltip=['cluster_type', 'spatial_clusters'])

san_diego.loc[['06073020206']].explore(m=m, color="#fa94a5")

gint.explore(san_diego, m=m, focal='06073020206', tooltip=['cluster_type', 'spatial_clusters'])This shows that the census tract has four neighbors.

To see where this actually is with respect to the set of clusters of type 3, we isolate on those:

san_diego[san_diego.cluster_type==3].explore() # cold-spotsAnd, now we can embed tract 9 and its neighbors in this wider context:

m = san_diego[san_diego.cluster_type==3].explore(column='spatial_clusters', categorical=True, legend=True,

tooltip=['cluster_type', 'spatial_clusters'])

gint.explore(san_diego, m=m, focal='06073020206', tooltip=['cluster_type', 'spatial_clusters'])Cluster type 3 (cold spot) accounts for 7 of the 27 spatial clusters.